An LLM solution for 95%+ automation of MedDRA term matching

For a Forbes Global 2000 client, we automated the matching of adverse event descriptions in clinical reports to standardized MedDRA vocabulary, achieving more than 95% automation. The system also handled terms that specialists had previously been unable to map manually. Faster, more accurate processing of clinical data reduces R&D costs, accelerates regulatory submissions, and ultimately helps bring new treatments to patients sooner.

The challenge: complex terminology and a manual bottleneck

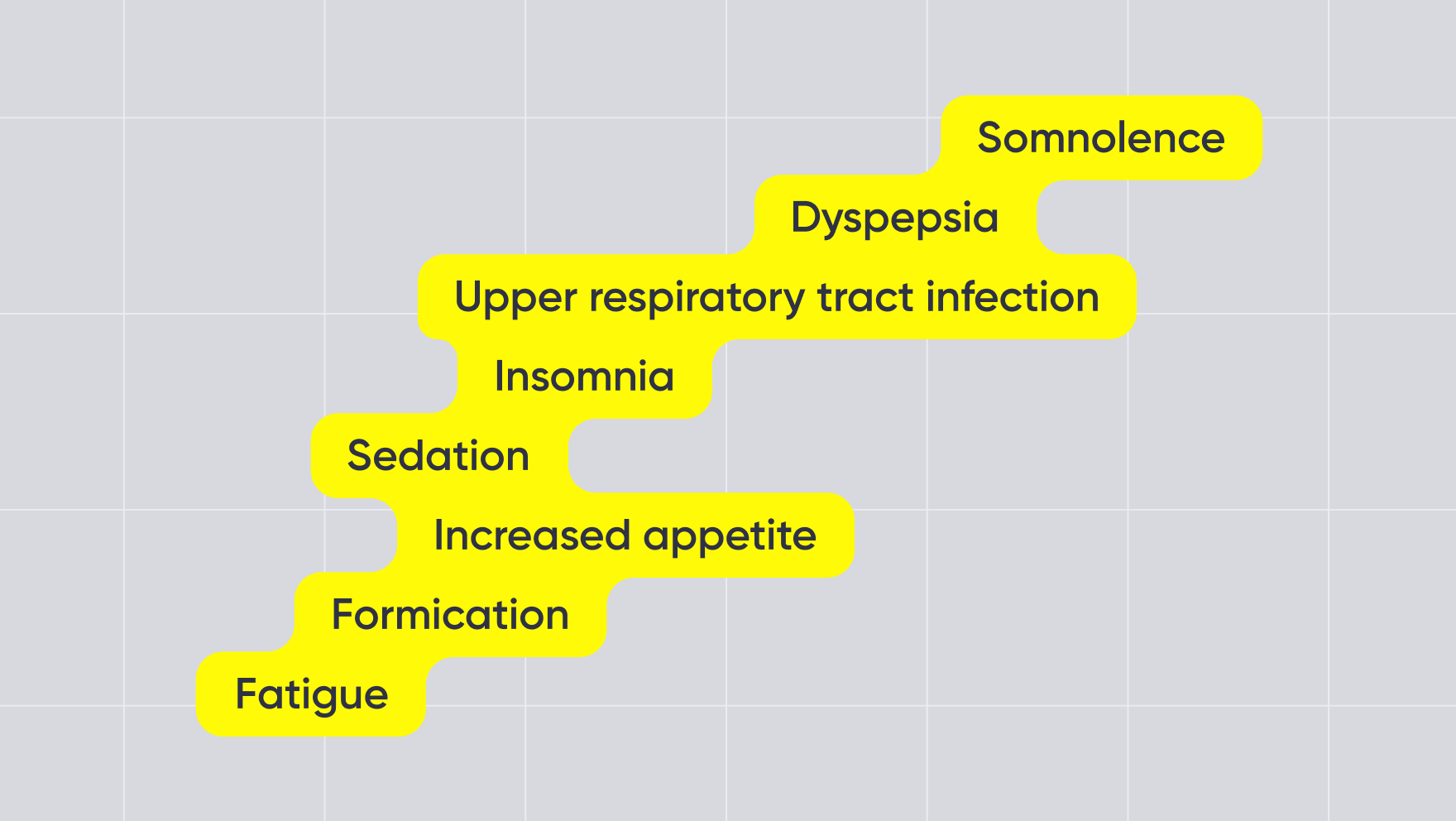

The client was manually converting unstructured medical terms from clinical study records into standardized codes from MedDRA (Medical Dictionary for Regulatory Activities), the global regulatory standard for pharmacovigilance and drug safety reporting. Medical descriptions are often multi-word, ambiguous, and context-dependent. Manual mapping required qualified experts and could take up to two weeks per study, creating a serious bottleneck in the data pipeline.

Rule-based matching and traditional NLP approaches proved insufficient: they rely on rigid string comparison or predefined synonym lists, which fail when the same concept is expressed in different words. For example, "throbbing pain in the temples" and "headache" describe the same event but share almost no text. The task required contextual understanding, the ability to interpret meaning rather than just match patterns. The company needed a reliable, automated standardization mechanism embedded directly into its existing ETL flows.

The solution: LLM-powered MedDRA mapping with strict validation

We began with a focused AI Readiness Workshop involving both technical and business stakeholders. In three hours, we:

- analyzed the existing data architecture and constraints

- identified integration risks

- selected the simplest viable implementation approach

Instead of redesigning the workflow, we introduced a lightweight automated mapping step embedded directly into the existing ETL pipeline. The solution combines:

- LLM-based semantic interpretation of medical terms

- Dataiku for orchestration and pipeline control

- API-based integration with internal systems

- Multi-stage validation against the official MedDRA dictionary

At its core, an LLM-powered service interprets free-text medical terms and proposes standardized MedDRA matches based on semantic meaning. Unlike rule-based systems, the model evaluates context, allowing it to handle non-standard phrasing and rare variations.
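As a rough illustration of this step, the sketch below shows one way a pipeline could ask an LLM for a candidate MedDRA term and parse its reply. The prompt wording, the JSON reply format, and the `call_llm` callable are assumptions for illustration only; the case study does not describe the client's prompt or model interface. The function returns a proposal, not a final answer: anything it produces still has to pass dictionary validation.

```python
import json
from typing import Callable, Optional

# Hypothetical prompt; the client's actual prompt is not described in the case study.
# Doubled braces become literal braces after str.format().
PROMPT_TEMPLATE = (
    "You are a pharmacovigilance coding assistant. "
    "Map the adverse event description below to the single best-matching "
    "MedDRA term. Reply with JSON only, in the form "
    '{{"term": "<MedDRA term name>"}}, or {{"term": null}} if unsure.\n\n'
    "Description: {verbatim}"
)


def propose_term(verbatim: str, call_llm: Callable[[str], str]) -> Optional[str]:
    """Ask an LLM for a candidate MedDRA term for one free-text description.

    `call_llm` is a placeholder for whatever LLM client the pipeline uses:
    it takes a prompt string and returns the model's raw text reply.
    The result is only a proposal and must still be checked against MedDRA.
    """
    prompt = PROMPT_TEMPLATE.format(verbatim=verbatim.strip())
    raw = call_llm(prompt)
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return None  # malformed reply: treat as "no proposal"
    term = payload.get("term") if isinstance(payload, dict) else None
    if isinstance(term, str) and term.strip():
        return term.strip()
    return None


# Usage with a fake LLM that always gives the same answer.
def fake_llm(prompt: str) -> str:
    return '{"term": "Headache"}'


print(propose_term("throbbing pain in the temples", fake_llm))  # → Headache
```

Asking for a constrained JSON reply keeps parsing trivial and lets malformed or uncertain answers fall through as "no proposal" instead of being passed downstream.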

Accuracy control and hallucination mitigation

To ensure reliability, every term proposed by the LLM is automatically cross-checked against the official MedDRA dictionary, and any term that does not exist in MedDRA is rejected. The system therefore produces either a validated output or no output at all. If no valid match is found, the term is automatically queued for reprocessing in the next pipeline cycle, and terms that remain unresolved after repeated attempts are flagged for expert review.

Before production rollout, domain experts validated the system. At this stage:

- Over 95% of terms were mapped automatically

- The system successfully standardized terms that specialists had previously been unable to classify manually
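The validate-or-reject rule and the retry path described in this section can be sketched as follows. This is a minimal illustration, not the production code: `MAX_ATTEMPTS`, the case-insensitive lookup, and the in-memory `MappingState` are assumptions, and the three-term dictionary is a tiny illustrative subset rather than real MedDRA data. A proposed term is kept only if it exists in the dictionary; otherwise the input is retried in later cycles and finally escalated to experts.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List, Optional, Set

MAX_ATTEMPTS = 3  # hypothetical; the case study only says "repeated attempts"


@dataclass
class MappingState:
    mapped: Dict[str, str] = field(default_factory=dict)       # verbatim -> validated MedDRA term
    retry_queue: Dict[str, int] = field(default_factory=dict)  # verbatim -> failed attempts so far
    expert_review: List[str] = field(default_factory=list)     # escalated to specialists


def build_index(meddra_terms: Set[str]) -> Dict[str, str]:
    """Case-insensitive lookup from a normalized key to the official spelling."""
    return {t.casefold(): t for t in meddra_terms}


def validate(candidate: Optional[str], index: Dict[str, str]) -> Optional[str]:
    """Return the official term if the candidate exists in MedDRA, else None (rejected)."""
    if not candidate:
        return None
    return index.get(candidate.strip().casefold())


def run_cycle(
    new_terms: Iterable[str],
    propose: Callable[[str], Optional[str]],
    index: Dict[str, str],
    state: MappingState,
) -> MappingState:
    """One pipeline cycle: retry queued terms first, then process new ones."""
    pending = list(dict.fromkeys([*state.retry_queue, *new_terms]))  # dedupe, keep order
    for verbatim in pending:
        term = validate(propose(verbatim), index)
        if term is not None:
            state.mapped[verbatim] = term
            state.retry_queue.pop(verbatim, None)
            continue
        attempts = state.retry_queue.get(verbatim, 0) + 1
        if attempts >= MAX_ATTEMPTS:
            state.retry_queue.pop(verbatim, None)
            state.expert_review.append(verbatim)  # unresolved: flag for expert review
        else:
            state.retry_queue[verbatim] = attempts  # try again next cycle
    return state


# Usage: a tiny illustrative dictionary and a stubbed proposer.
# "Fever syndrome" plays the role of a hallucinated term that is not in the dictionary.
index = build_index({"Headache", "Nausea", "Pyrexia"})
guesses = {"throbbing head pain": "headache", "felt feverish": "Fever syndrome"}
state = MappingState()
for batch in (["throbbing head pain", "felt feverish"], [], []):
    run_cycle(batch, guesses.get, index, state)
print(state.mapped)         # → {'throbbing head pain': 'Headache'}
print(state.expert_review)  # → ['felt feverish']
```

Because the dictionary check is the only path into `mapped`, a hallucinated term can delay a mapping but can never produce an invalid output.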

Integration, reliability, and security

The solution operates as a scheduled component within the client’s production ETL pipelines. Batches of new medical terms are sent via REST API to the Dataiku environment, processed, validated, and written into Snowflake for downstream regulatory and analytical use. The process runs overnight, so teams start each business day with clean, validated, standardized data. The system processes only study identifiers and medical terminology. It does not handle personal patient data and does not transmit data outside the organization, ensuring compliance with internal security policies.
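A minimal sketch of the nightly batch step, assuming the mapping-plus-validation logic is available as a single function. The record types (`TermRecord`, `MappedRow`), the in-batch deduplication, and the `load_rows` callback are illustrative assumptions; `load_rows` stands in for the actual write to Snowflake, which is not shown. The input type carries only a study identifier and the verbatim term, mirroring the data scope described above.

```python
from dataclasses import asdict, dataclass
from typing import Callable, Dict, Iterable, List, Optional


@dataclass(frozen=True)
class TermRecord:
    """Input row: only a study identifier and the verbatim term, no patient data."""
    study_id: str
    verbatim: str


@dataclass(frozen=True)
class MappedRow:
    """Output row ready to be loaded downstream."""
    study_id: str
    verbatim: str
    meddra_term: str


def nightly_batch(
    records: Iterable[TermRecord],
    map_term: Callable[[str], Optional[str]],
    load_rows: Callable[[List[dict]], None],
) -> List[TermRecord]:
    """Map one batch, load only validated rows, and return unresolved records.

    map_term: returns a validated MedDRA term or None (LLM proposal + dictionary check).
    load_rows: placeholder for the warehouse write; not a real Snowflake API call.
    """
    cache: Dict[str, Optional[str]] = {}
    rows: List[MappedRow] = []
    unresolved: List[TermRecord] = []
    for rec in records:
        key = rec.verbatim.strip()
        if key not in cache:
            cache[key] = map_term(key)  # each distinct term is mapped once per batch
        term = cache[key]
        if term is None:
            unresolved.append(rec)  # carried over to the next cycle
        else:
            rows.append(MappedRow(rec.study_id, rec.verbatim, term))
    if rows:
        load_rows([asdict(r) for r in rows])
    return unresolved


# Usage with a dictionary lookup standing in for the real mapping step.
loaded: List[dict] = []
batch = [
    TermRecord("STUDY-001", "headache"),
    TermRecord("STUDY-002", "headache "),
    TermRecord("STUDY-002", "odd feeling"),
]
left = nightly_batch(batch, {"headache": "Headache"}.get, loaded.extend)
print(len(loaded), [r.verbatim for r in left])  # → 2 ['odd feeling']
```

Caching per distinct term is a design choice for this sketch: the same verbatim phrase often recurs across studies, so mapping it once per batch avoids redundant LLM calls.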

Results

Automating more than 95% of mappings, including cases that experts had previously been unable to resolve, eliminated a critical operational bottleneck in pharmacovigilance reporting. The impact:

- Standardization time reduced from up to two weeks per study to a single overnight run

- Near-100% validated accuracy

- Faster pharmacovigilance reporting cycles

- Seamless integration with existing ETL, Dataiku, and Snowflake infrastructure

What was once a slow, expert-dependent process is now a scalable, automated background workflow that runs overnight and delivers standardized results every morning.

Earlier, we described how we built a GenAI search platform for a major pharma company, turning days of manual research across scientific sources into a process that takes minutes.